gitlab-ag - Combining Git and Autograding

Planned in December 2014 and first released in January 2015, gitlab-ag is a project that aims to enhance GitLab, an open-source GitHub-like system, for educational use. The primary goal is to facilitate batch operations on GitLab and to integrate an automated grading mechanism, thereby replacing the AutoGrader system used in the past. It runs as a standalone website that talks to GitLab through the GitLab API.

GitHub Repository: https://github.com/xybu/gitlab-ag

Documentation: https://github.com/xybu/gitlab-ag/blob/master/README.md

License: GPLv2

Features

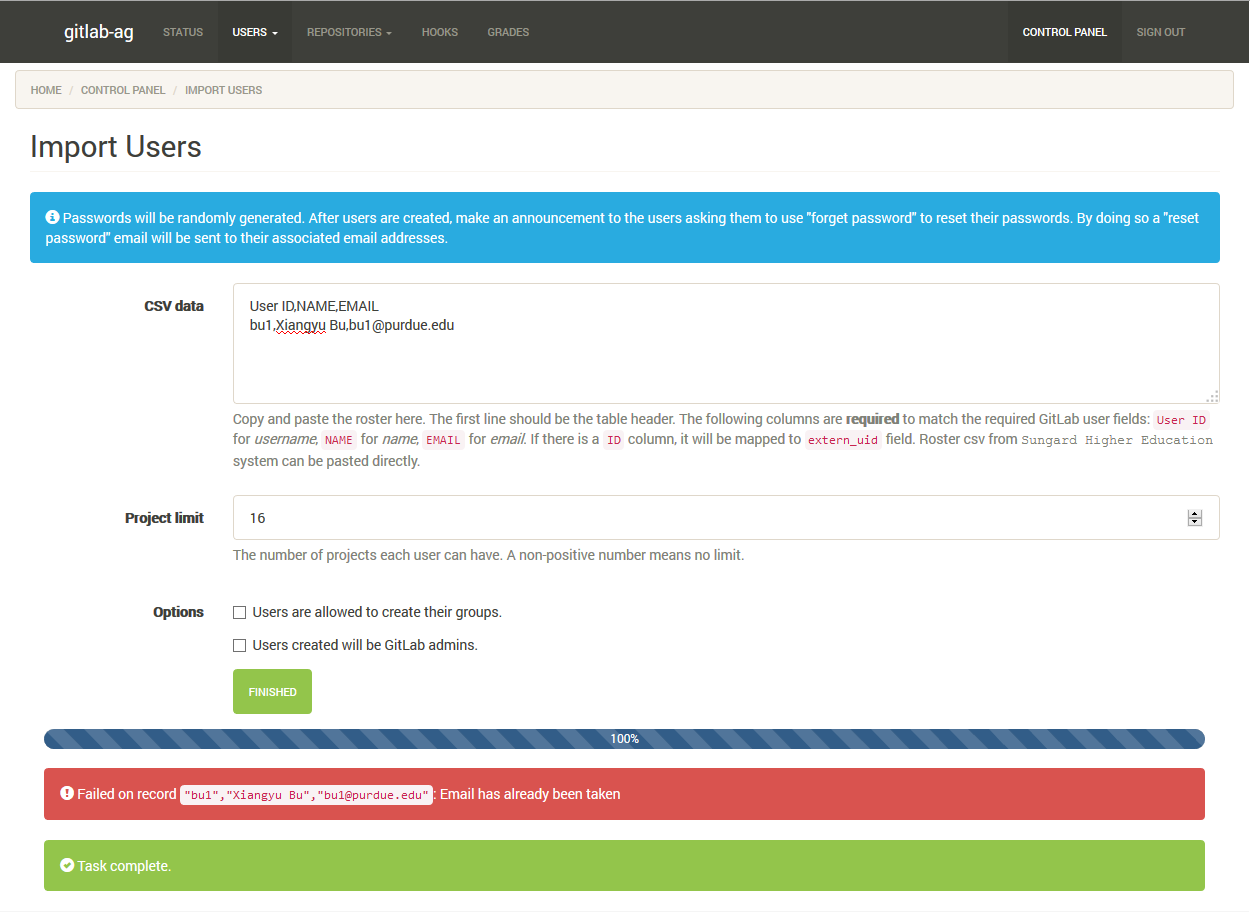

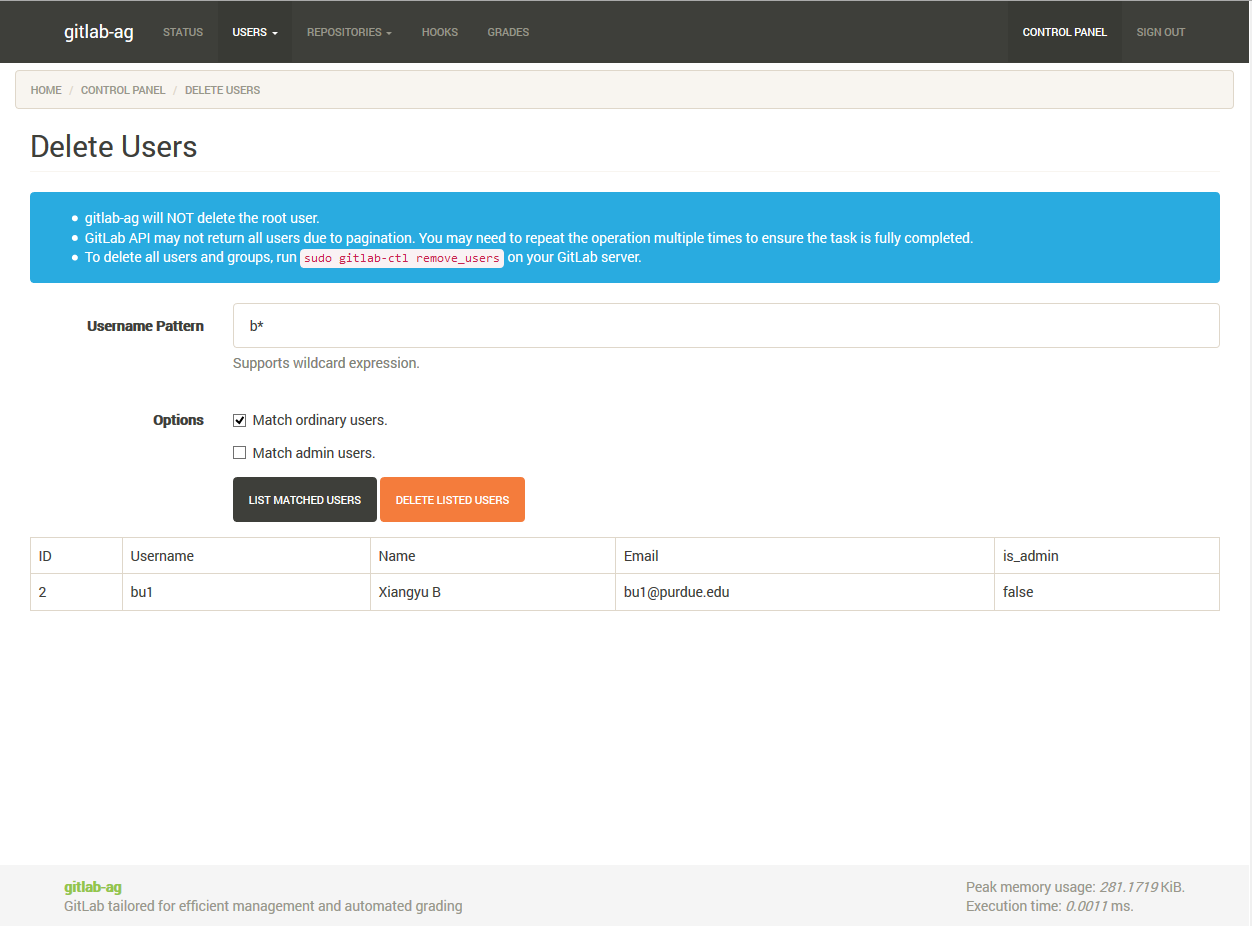

- Import / delete users in batch: import students (GitLab users) from CSV when a new semester starts, and delete all users after the semester ends.

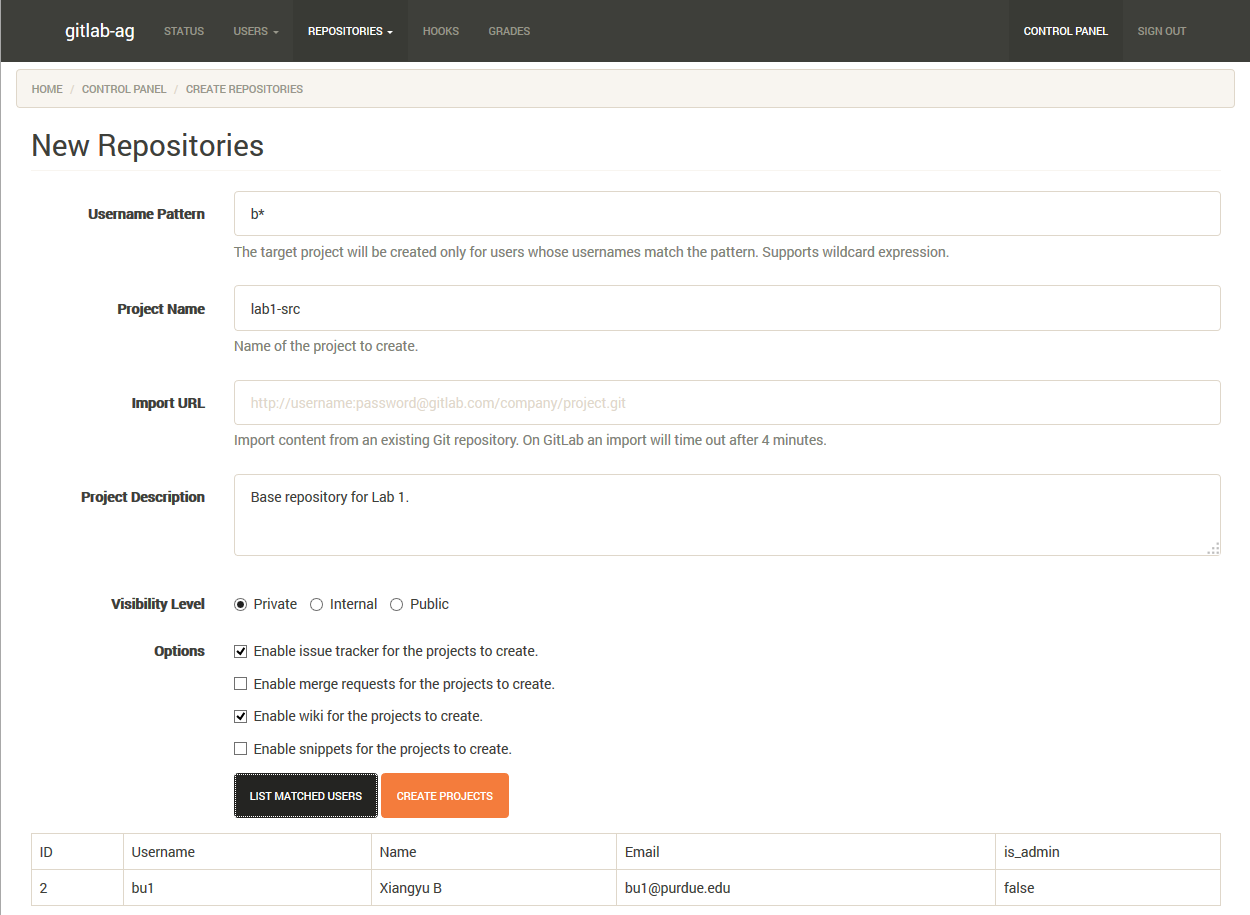

- Create / clone new projects in batch: create new projects with the same parameters for any set of users, optionally cloning from an existing git repository.

- Backup at push: a designated directory always holds the latest copy of the monitored GitLab projects.

- System event logging and notification: notify instructors if a GitLab system event is emitted.

- Automated grading: grade a submission after the student pushes code to GitLab.

gitlab-ag is built on top of PHP 5.5 with no framework involved. Some auto-grading delegates are written in Python 3k.
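As a rough illustration of the batch import feature, the sketch below turns CSV text into the fields that GitLab's user-creation endpoint expects (`username`, `name`, `email`, `password`). It is not gitlab-ag's code: the column names, the sample rows, and the `users_from_csv` helper are assumptions made for this example, and the actual CSV layout is described in the project README.

```python
import csv
import io


def users_from_csv(csv_text, default_password):
    """Convert CSV rows into dicts usable as bodies for GitLab's POST /users.

    The column names (username, name, email) are assumed for this sketch.
    """
    reader = csv.DictReader(io.StringIO(csv_text))
    users = []
    for row in reader:
        username = (row.get("username") or "").strip()
        if not username:
            continue  # skip rows without a username
        users.append({
            "username": username,
            "name": (row.get("name") or "").strip(),
            "email": (row.get("email") or "").strip(),
            "password": default_password,
        })
    return users


# Hypothetical sample data
SAMPLE = """username,name,email
alice,Alice Example,alice@example.edu
bob,Bob Example,bob@example.edu
"""

if __name__ == "__main__":
    for user in users_from_csv(SAMPLE, "change-me-please"):
        print(user["username"], user["email"])
```

Each resulting dict would then be sent to GitLab in its own API request. Keeping the parsing separate from the network calls makes it easy to preview the import before anything is created.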

Gallery

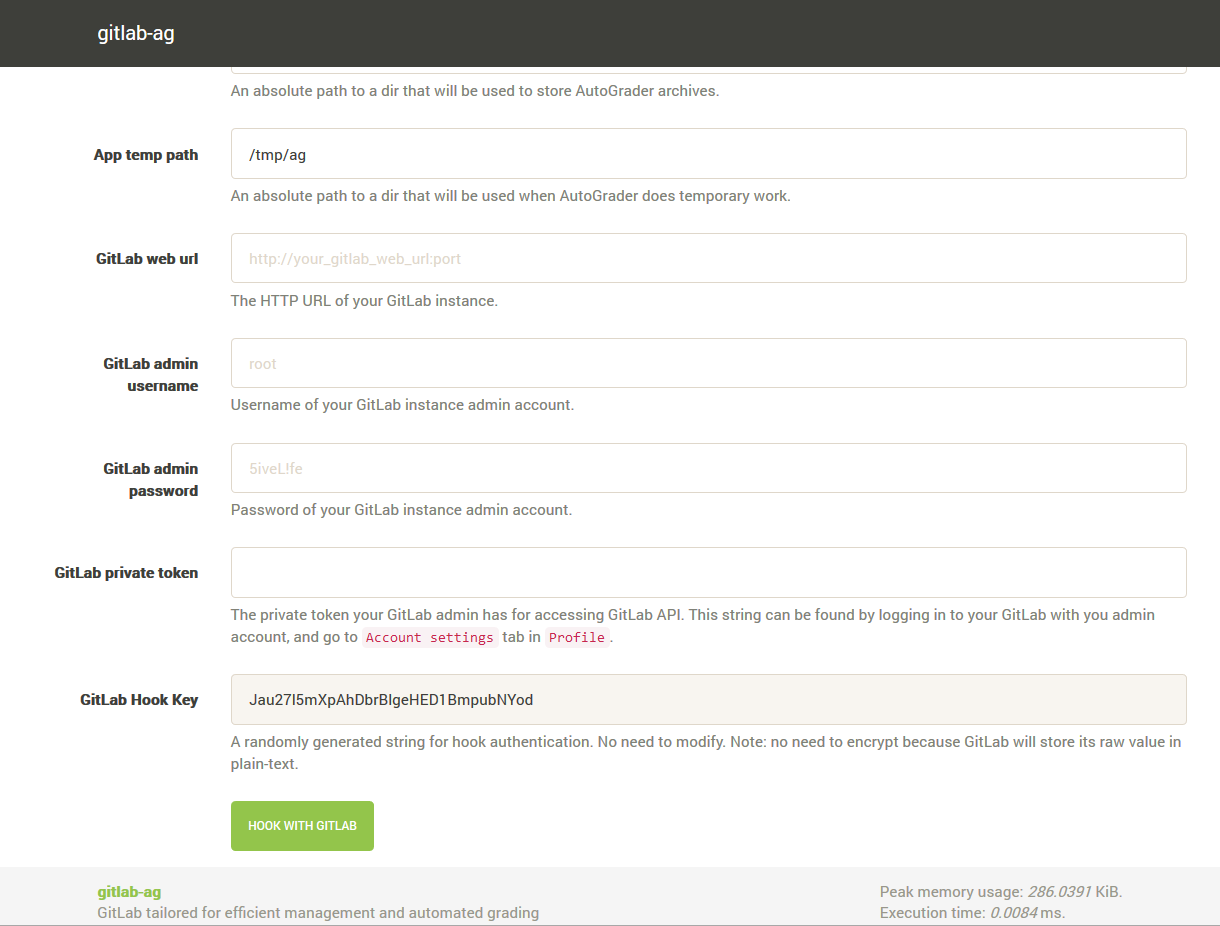

Installation page of gitlab-ag.

Import users from CSV content to GitLab

Delete users by pattern

Add new repository (clone skeleton code repo) for students

Why use Git?

Based on my observations as a teaching assistant for Purdue's CS240 course, using Git to manage student submissions makes it much easier to track their progress and detect cheating. When students are asked to commit their progress on assignments continuously with git commit, and that progress counts toward the grade, they have less incentive to risk their grade by copying someone else's source code. For instructors, analyzing cheating cases becomes much easier thanks to the commit log. The professor and I agreed that both students and instructors would be better off with the full Git experience, so we picked GitLab as the base and developed gitlab-ag to add the extra features we wanted.

Why autograding?

With a standardized environment, streamlined and sandboxed execution of untrusted code (sorry to say this, but we as instructors cannot trust any student program unless we have read its source code carefully), and automatic generation and notification of grades, grading becomes less biased. Instructors have more time to help students, and students learn their grade shortly after their submission is graded.

Autograding remains a black-box test procedure, no matter how much code is written to check student code (e.g., checking which functions the student program calls, or whether it uses libraries that aren't allowed). To mitigate the drawbacks of black-box testing, we also inspect student code in some cases.
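One of the checks mentioned above, detecting libraries that are not allowed, can be approximated with a simple text scan. The sketch below assumes the submissions are C source files and that "library" means a header named in an `#include` line. The `disallowed_includes` helper and the allowed set are hypothetical and are not taken from gitlab-ag.

```python
import re

# Matches lines such as `#include <stdio.h>` or `# include "mylib.h"`
INCLUDE_RE = re.compile(r'^\s*#\s*include\s*[<"]([^>"]+)[>"]', re.MULTILINE)


def disallowed_includes(source, allowed):
    """Return the sorted headers included by `source` that are not in `allowed`."""
    found = INCLUDE_RE.findall(source)
    return sorted({header for header in found if header not in allowed})


if __name__ == "__main__":
    code = '#include <stdio.h>\n#include <regex.h>\nint main(void) { return 0; }\n'
    print(disallowed_includes(code, {"stdio.h"}))
```

This is purely a text-level check: it does not see headers pulled in through macros, and it would also flag an include that sits inside a multi-line comment, which is one reason manual inspection is still needed.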

Improvements from AutoGrader

There are two noticeable changes since the last update of the AutoGrader project:

The API is simplified so that the test runner can be written in any language, not only Python. The output format is up to the test runner as long as the autograder-readable key tag is provided.
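To make the idea of a key tag concrete, here is a minimal sketch of how a runner could mark its result and how a grader could pick it out of otherwise free-form output. The tag name `AG_RESULT:` and the JSON payload are invented for this example; the actual tag that gitlab-ag looks for is defined by the project, not here.

```python
import json

TAG = "AG_RESULT:"  # hypothetical tag, not gitlab-ag's actual format


def emit_result(score, max_score):
    """Build the tagged line a test runner would print alongside its own output."""
    return TAG + json.dumps({"score": score, "max": max_score})


def parse_result(output):
    """Return the payload of the last tagged line, or None if no tag is present."""
    for line in reversed(output.splitlines()):
        if line.startswith(TAG):
            return json.loads(line[len(TAG):])
    return None


if __name__ == "__main__":
    runner_output = "test 1 ok\ntest 2 failed\n" + emit_result(8, 10) + "\n"
    print(parse_result(runner_output))
```

Because the grader only looks for the tagged line, a runner written in any language can print whatever diagnostics it likes around it.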

The whole test runs inside a Docker container, so the entire grading session is virtualized, not just one command. The old isolation tool based on User-mode Linux (UML) has been abandoned.
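A container-wide grading session could be launched along the following lines. This is a sketch under stated assumptions, not the command gitlab-ag actually runs: the image name, mount path, memory limit, and runner command are hypothetical, and only standard `docker run` flags from the current Docker CLI are used (`--rm`, `--network none`, `--memory`, `-v`).

```python
import subprocess


def docker_grading_command(image, submission_dir, runner_cmd, memory="256m"):
    """Build a `docker run` argument list that executes the whole test runner
    inside one throwaway container."""
    return [
        "docker", "run",
        "--rm",                                     # remove the container afterwards
        "--network", "none",                        # no network for untrusted code
        "--memory", memory,                         # cap memory usage
        "-v", f"{submission_dir}:/submission:ro",   # mount the submission read-only
        image,
    ] + list(runner_cmd)


if __name__ == "__main__":
    cmd = docker_grading_command(
        "grader-image:latest",                 # hypothetical image name
        "/srv/submissions/alice/lab1",         # hypothetical path
        ["python3", "/grader/run_tests.py", "/submission"],
    )
    print(" ".join(cmd))
    # To actually run it (requires Docker and the image to exist):
    # subprocess.run(cmd, capture_output=True, text=True, timeout=300)
```

Passing the command as a list rather than a shell string avoids quoting problems when paths contain spaces.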